How do you build computational graph in TensorFlow?

TensorFlow uses a dataflow graph to represent your computation in terms of the dependencies between individual operations. This leads to a low-level programming model in which you first define the dataflow graph, then create a TensorFlow session to run parts of the graph across a set of local and remote devices.

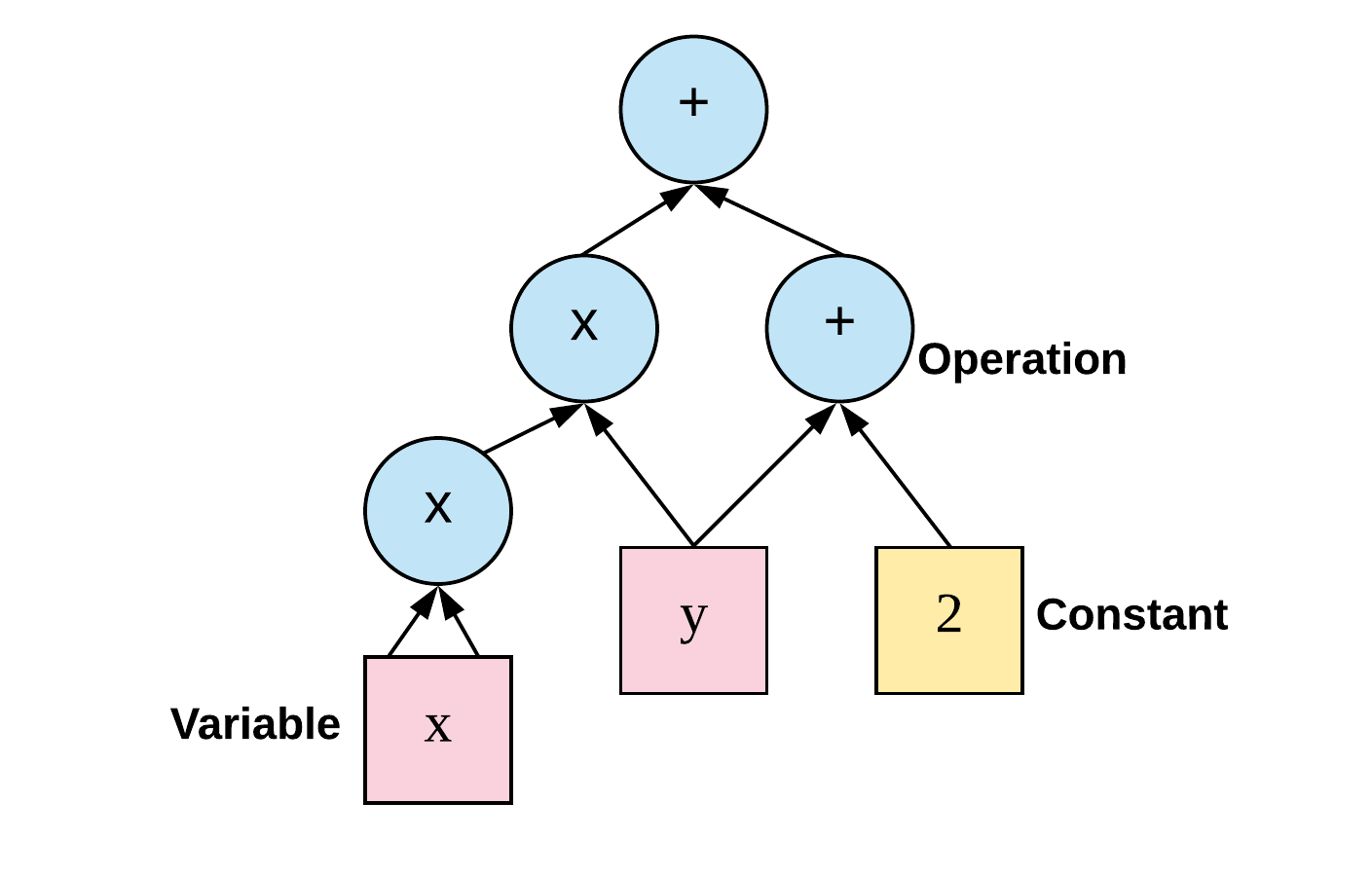

Dataflow is a common programming model for parallel computing. In a dataflow graph, the nodes represent units of computation, and the edges represent the data consumed or produced by a computation. For example, in a TensorFlow graph, the tf.matmul operation would correspond to a single node with two incoming edges (the matrices to be multiplied) and one outgoing edge (the result of the multiplication).

Dataflow has several advantages that TensorFlow leverages when executing your programs:

Parallelism. By using explicit edges to represent dependencies between operations, it is easy for the system to identify operations that can execute in parallel.

Distributed execution. By using explicit edges to represent the values that flow between operations, it is possible for TensorFlow to partition your program across multiple devices (CPUs, GPUs, and TPUs) attached to different machines. TensorFlow inserts the necessary communication and coordination between devices.

Compilation. TensorFlow’s XLA compiler can use the information in your dataflow graph to generate faster code, for example, by fusing together adjacent operations.

Portability. The dataflow graph is a language-independent representation of the code in your model. You can build a dataflow graph in Python, store it in a SavedModel, and restore it in a C++ program for low-latency inference.

A computational graph is a series of TensorFlow operations arranged into a graph of nodes.

import tensorflow as tf

# If we consider a simple multiplication a = 2 b = 3 mul = a*b

print ("The multiplication produces:::", mul)

The multiplication produces::: 6

# But consider a tensorflow program to replicate above at = tf.constant(3) bt = tf.constant(4)

mult = tf.mul(at, bt)

print ("The multiplication produces:::", mult)

The multiplication produces::: Tensor("Mul:0", shape=(), dtype=int32)Each node takes zero or more tensors as inputs and produces a tensor as an output. One type of node is a constant. Like all TensorFlow constants, it takes no inputs, and it outputs a value it stores internally.