Explain in detail about Gradient Descent?

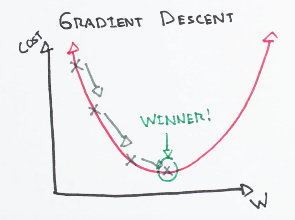

Gradient descent is a first-order optimization algorithm: it depends on the first-order derivative of a loss function. It calculates that


Gradient Descent & Stochastic Gradient Descent

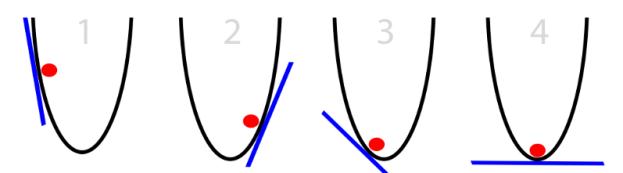

The prerequisites for this article:

1. Familiarity with terms such as model parameters and cost function
2. Calculus: first-order derivatives (chain rule and power
