All about auto-encoders

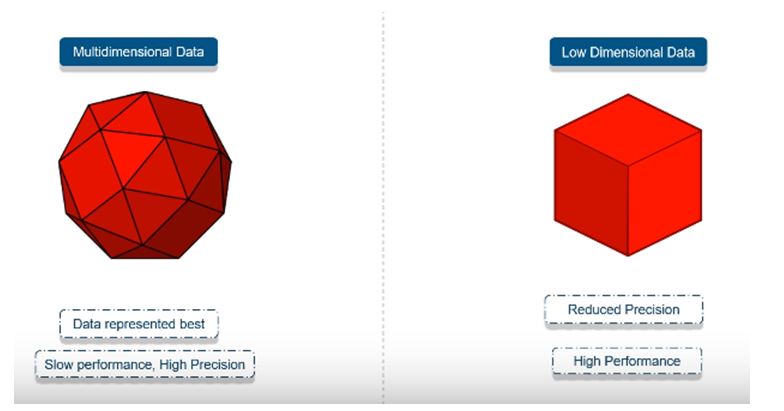

In this tutorial we will deal with Auto Encoders and how are they used in dimensionality reduction.When working with real problems and real data we often deal with high dimensional data that go up to millions. while in its original high dimensional structure the data represents itself best and sometimes we might need to reduce its dimensionality the need to reduce the dimensionality is often associated to visualizations but that is not always the case sometimes we might value performance over precision.

so we could reduce thousand dimensional data into ten dimensional data so that we can manipulate them faster now the aim of the auto encoders is to learn a compressed distributed representation for given data but we already have principle component analysis(PCA) for that then why do we need auto encoders because PCA is restricted to linear while auto encoder have nonlinear encoder and decoder.

Additionally with an increasing amount of features PCA results slow performance but despite the fact the practical applications of auto encoders are pretty rare some time back. Today data de-noising and dimensionality reduction for data visualization are considered as two main interesting practical applications of auto encoders with appropriate dimensionality and sparsely constraints Auto encoders can learn data projections that are more interesting than PCA and other techniques

So, lets have a detail look into what are auto encoders these are simple networks that aims to transform inputs into outputs with minimum possible error. we want the output to be as close as possible with inputs.

For that we will add some couple of layers in between input and output and the sizes of these layers are less that the input layer let’s say input layer has the dimensionality on N then output layer dimensionality of N now we can make the input go through the layer of size P where P<N and we ask the network to recreate the input.

Now lets dive into the working of these encoders to reconstruct the input.the main goal of these neural networks is to take unlabel inputs,encode them and try to reconstruct them afterwards based on the most valuable features identified in the data.

Now mathematically speaking encoder is a model that takes vecot input “x” and maps into a hidden representation “h” using encoder.this hidden representation ”h” usually called “code”.which can be mapped back into the space of “x” using decoder.Now the goal of the auto encoder is to minimize the reconstruction error which is the measured distance between input “x” and output ”r”.The code is typically has less dimensions then “x”.The encoder compresses the input into latent-space representation and then decoder transforms it into output.

“Auto-encoders are shallow networks with an input layer,few hidden layers and an output layer”

Applications of Auto-Encoders

So,auto encoders are used to convert any black-and-white image into color images. Depending on what is in the picture it is possible to tell what the color should be

The second application is Feature variation in this scenario it extracts the required features of an image and generate the output image without any noise and unnecessary interruption.

Third one is dimensionality reduction by using the auto encoders it is easy to reconstruct the similar image along with reduced dimensional value.

The next application is Denoising images, during the training the auto encoders learn to extract important features from input images and ignore the image noises because the labels have no noises the input seen by auto encoders is not the raw input but stochastically corrupted version.

We can also remove the watermark from an image or unwanted patterns from the required images.

Types of Auto-Encoders

Convolutional Auto-encoders:

These encoders uses the convolution operator which allows filtering the input signal to extract some part of its content.Auto encoders in their traditional formulation do not take into account the fact that the signal can be seen as sum of other signals .These convolution auto encoders use the convolution operator to exploit the observations.

“O(I,j)”- input pixal in i&j

“F” – convolution filter

“I” – input image

Variational Auto-encoders:

They let us design complex generative model os data and fit them to large datasets. They can generate the faces of fictional celebrities in high resolution digital art work.

Deep Auto-encoders:

A deep auto encoder comprises of two symmetrical deep-belief networks.the first four or five shallow layers represents the encoding half of the net and second set of four or five layers that make up the decoding half.

These layers are restricted Boltzmen machines building blocks of deep belief networks with several peculiarities.

Contractive Autoencoders:

These are type of regularized auto encoders .these regularized auto encoders try to learn latent variables but in a different way rather than limiting the no.of latent variables they add some sort of penalty or noise to the loss function. Contractive