Types of Regression Techniques

Regression analysis is an important tool for modelling and analysing data. It describes the relationship between the dependent variable (the target label) and the independent variables (the predictors). In short, it is a predictive modelling technique that relates the predictors to the target label. It is mainly used to predict continuous target variables; logistic regression, described below, is the exception, since it predicts a categorical outcome.

Using regression analysis, we can identify:

Significant relationships between the dependent and independent variables.

The strength of the impact of the independent variables on the dependent variable.

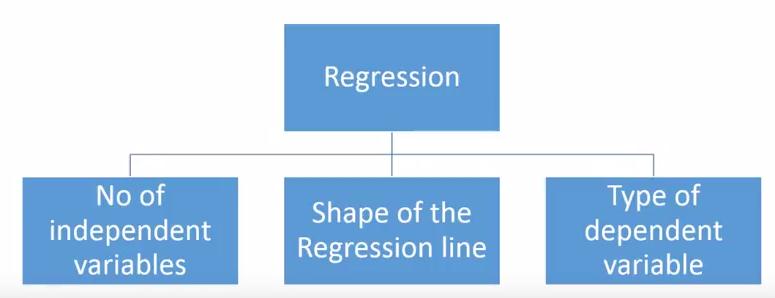

There are various regression techniques for making predictions. They are classified based on:

1. The number of independent variables.

2. The type of dependent variable.

3. The shape of the regression line.

Types:

1. Linear Regression.

2. Logistic Regression.

3. Polynomial Regression.

4. Stepwise Regression.

5. Ridge Regression.

6. Lasso Regression.

7. Elastic Net Regression.

1. Linear Regression:

Linear regression is usually the first technique people pick for predictive modelling. The independent variables can be continuous or discrete, and the dependent variable is continuous.

Linear regression establishes the relationship between the dependent variable and one or more independent variables using a best-fit straight line (the regression line). With one independent variable it can be written as $y = mx + c$, where m is the slope and c is the intercept; the full model also adds an error term for the part of y that the line does not explain.

With only one independent variable this is called simple linear regression; with more than one independent variable it is called multiple linear regression. Multiple linear regression can suffer from multicollinearity, heteroskedasticity and autocorrelation.

The most common way to fit the best-fit line is the least squares method, which minimizes the sum of the squared errors between the observed and predicted values.
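As a minimal sketch of the least squares method for one independent variable, the estimates have a closed form: m is the covariance of x and y divided by the variance of x, and c = mean(y) - m * mean(x). The function names `fit_simple_linear` and `sum_squared_errors` and the sample data are illustrative, and the code is plain Python with no libraries.

```python
def fit_simple_linear(xs, ys):
    """Least squares fit of y = m*x + c for a single independent variable."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: covariance of x and y divided by the variance of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    m = sxy / sxx
    # Intercept: the least squares line passes through (mean_x, mean_y).
    c = mean_y - m * mean_x
    return m, c


def sum_squared_errors(xs, ys, m, c):
    """The quantity that least squares minimizes."""
    return sum((y - (m * x + c)) ** 2 for x, y in zip(xs, ys))


xs = [1, 2, 3, 4, 5]
ys = [3.1, 4.9, 7.2, 8.8, 11.0]
m, c = fit_simple_linear(xs, ys)
print(f"m = {m:.3f}, c = {c:.3f}")  # m = 1.970, c = 1.090
```

Moving m or c away from these values increases `sum_squared_errors`, which is exactly what "best fit" means under least squares.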

2. Logistic Regression:

Logistic regression is used when the outcome is binary (yes/no, true/false, 0/1). It estimates the probability that an event is a success or a failure, and keeps the predicted values between 0 and 1 by modelling the log-odds of the probability p as a linear function of the predictors:

$$\ln\left(\frac{p}{1-p}\right) = mx + c \quad\Longleftrightarrow\quad p = \frac{1}{1 + e^{-(mx + c)}}$$

It is mainly used in classification problems. It does not require a linear relationship between the dependent and independent variables, because the relationship is linear in the log-odds rather than in p itself. It does assume that the independent variables are not strongly correlated with each other, i.e. that there is little or no multicollinearity. If the dependent variable is ordinal, the model is called ordinal logistic regression; if the dependent variable has more than two classes, it is called multinomial logistic regression.
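The sketch below fits the binary model p = 1 / (1 + e^-(mx + c)) for a single predictor by plain batch gradient descent on the log-loss. Gradient descent is an assumption made here for simplicity (libraries usually use other solvers), and the learning rate, number of epochs, helper names and data are illustrative.

```python
import math


def sigmoid(z):
    """Map any real number into (0, 1); two branches avoid overflow in exp."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logistic(xs, labels, lr=0.5, epochs=10000):
    """Fit p = sigmoid(m*x + c) by batch gradient descent on the average log-loss."""
    m, c = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradient of the average log-loss is the mean of (p - y) * x and of (p - y).
        errors = [sigmoid(m * x + c) - y for x, y in zip(xs, labels)]
        grad_m = sum(e * x for e, x in zip(errors, xs)) / n
        grad_c = sum(errors) / n
        m -= lr * grad_m
        c -= lr * grad_c
    return m, c


xs = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
labels = [0, 0, 0, 1, 0, 1, 1, 1]  # not perfectly separable, so m stays finite
m, c = fit_logistic(xs, labels)
for x in (0.5, 1.0, 3.5, 4.0):
    p = sigmoid(m * x + c)
    print(f"x = {x}: P(y=1) = {p:.3f}, predicted class = {int(p >= 0.5)}")
```

Every predicted value is a probability between 0 and 1; the class is obtained by thresholding it, here at 0.5.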

3. Polynomial Regression:

If the power of the independent variable is greater than 1, the model is called polynomial regression. Here the best-fit line is not a straight line but a curve that fits the data points. For example, a second-degree polynomial regression is:

$$y = m_1 x + m_2 x^2 + c$$
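A polynomial model such as y = c + m1·x + m2·x² is still linear in its coefficients, so it can be fitted with the same least squares method as linear regression by treating x and x² as separate predictors. The sketch below, in plain Python, builds the columns 1, x, x², ..., and solves the normal equations with Gaussian elimination; the helper names and data are illustrative.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix [a | b], copied so the inputs are not modified.
    aug = [list(map(float, row)) + [float(b[i])] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[r][k] -= factor * aug[col][k]
    # Back substitution.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(aug[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (aug[i][n] - s) / aug[i][i]
    return x


def fit_polynomial(xs, ys, degree):
    """Least squares fit of y = coef[0] + coef[1]*x + ... + coef[degree]*x**degree."""
    design = [[x ** p for p in range(degree + 1)] for x in xs]
    size = degree + 1
    # Normal equations: (X^T X) coef = X^T y.
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(size)]
           for i in range(size)]
    xty = [sum(row[i] * y for row, y in zip(design, ys)) for i in range(size)]
    return solve_linear_system(xtx, xty)


def predict(coef, x):
    return sum(c * x ** p for p, c in enumerate(coef))


xs = [-2, -1, 0, 1, 2, 3]
ys = [1 + 2 * x + 3 * x ** 2 for x in xs]  # exact quadratic: c = 1, m1 = 2, m2 = 3
coef = fit_polynomial(xs, ys, degree=2)
print([round(v, 6) for v in coef])  # [1.0, 2.0, 3.0]
```

Raising `degree` makes the curve more flexible, at the cost of a greater risk of overfitting.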

4. Stepwise Regression:

Stepwise regression is used when there are multiple independent variables, and the selection of independent variables is automatic, without human intervention. It fits the regression by adding or dropping covariates one at a time, based on a statistical criterion such as R-squared, t-statistics or AIC. Some common stepwise methods are:

Standard stepwise regression adds or drops independent variables as needed at each step.

Forward selection starts with the most significant predictor and adds variables one at a time.

Backward selection starts with all predictors and removes the least significant variable at each step.
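Forward selection can be sketched as a greedy loop: at each step, try every remaining predictor, keep the one that raises R-squared the most, and stop when the best improvement is too small. The stopping rule used here (an R-squared gain below a hypothetical `min_gain` of 0.01) is an assumption chosen for simplicity; real tools often use p-values, F-tests or AIC instead. The function names and data are illustrative.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [list(map(float, row)) + [float(b[i])] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[r][k] -= factor * aug[col][k]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(aug[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (aug[i][n] - s) / aug[i][i]
    return x


def r_squared(columns, y):
    """R^2 of a least squares fit of y on an intercept plus the given columns."""
    n = len(y)
    design = [[1.0] + [col[i] for col in columns] for i in range(n)]
    size = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(size)]
           for i in range(size)]
    xty = [sum(row[i] * yi for row, yi in zip(design, y)) for i in range(size)]
    beta = solve_linear_system(xtx, xty)
    mean_y = sum(y) / n
    rss = sum((yi - sum(b * v for b, v in zip(beta, row))) ** 2
              for row, yi in zip(design, y))
    tss = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - rss / tss


def forward_selection(features, y, min_gain=0.01):
    """Greedily add the predictor that raises R^2 the most.

    Stopping rule (an assumption for this sketch): stop when the best
    available R^2 improvement is smaller than min_gain.
    """
    selected, remaining = [], list(features)
    current_r2 = 0.0  # R^2 of the intercept-only model
    while remaining:
        scores = {name: r_squared([features[s] for s in selected + [name]], y)
                  for name in remaining}
        best = max(scores, key=scores.get)
        if scores[best] - current_r2 < min_gain:
            break
        selected.append(best)
        remaining.remove(best)
        current_r2 = scores[best]
    return selected


x1 = [1, 2, 3, 4, 5, 6, 7, 8]
x2 = [3, 1, 4, 1, 5, 9, 2, 6]
x3 = [2, 7, 1, 8, 2, 8, 1, 8]  # not used to generate y
noise = [0.1, -0.1, 0.05, -0.05, 0.1, -0.1, 0.05, -0.05]
y = [2 * a + 3 * b + e for a, b, e in zip(x1, x2, noise)]

features = {"x1": x1, "x2": x2, "x3": x3}
selected = forward_selection(features, y)
print(selected)  # ['x2', 'x1']
```

The irrelevant predictor `x3` is never added, because once `x1` and `x2` are in the model there is almost no variance left for it to explain.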

5. Ridge Regression:

Ridge regression is a regularization technique used when the data suffers from multicollinearity. Under multicollinearity, the least squares estimates are unbiased, but their variances are large, so the predicted values can deviate far from the actual values. As in linear regression, the equation includes an error term e:

$$y = mx + c + e$$

Prediction errors come from both bias and variance. Ridge regression reduces the variance caused by multicollinearity by adding a penalty on the size of the coefficients, controlled by a shrinkage parameter λ (lambda).

Ridge regression does not perform feature selection: it shrinks coefficients toward zero, but never makes them exactly zero. It is also called L2 regularization.
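Concretely, ridge regression chooses the coefficients that minimize the sum of squared errors plus λ times the sum of squared coefficients, which gives the closed form (XᵀX + λI)⁻¹Xᵀy. The sketch below assumes two predictors and centered data (so the intercept can be dropped) and uses the extreme case of two identical columns: ordinary least squares has no unique solution there because XᵀX is singular, while any λ > 0 makes the system solvable. The function name and data are illustrative.

```python
def ridge_two_features(x1, x2, y, lam):
    """Ridge estimates for y = b1*x1 + b2*x2 on centered data (no intercept).

    Solves (X^T X + lam * I) b = X^T y for the 2x2 case with Cramer's rule.
    """
    s11 = sum(a * a for a in x1) + lam
    s22 = sum(b * b for b in x2) + lam
    s12 = sum(a * b for a, b in zip(x1, x2))
    t1 = sum(a * v for a, v in zip(x1, y))
    t2 = sum(b * v for b, v in zip(x2, y))
    det = s11 * s22 - s12 * s12
    if det == 0:
        raise ValueError("X^T X + lam*I is singular; use lam > 0")
    b1 = (t1 * s22 - s12 * t2) / det
    b2 = (s11 * t2 - s12 * t1) / det
    return b1, b2


x = [-2.0, -1.0, 0.0, 1.0, 2.0]  # centered predictor
y = [2 * v for v in x]           # y = 2x, already centered
# Perfect multicollinearity: the second predictor is a copy of the first.
for lam in (0.1, 1.0, 10.0, 100.0):
    b1, b2 = ridge_two_features(x, x, y, lam)
    print(f"lambda = {lam:>5}: b1 = {b1:.4f}, b2 = {b2:.4f}")
```

For this data each coefficient works out to 20 / (λ + 20): the two stay equal and shrink toward zero as λ grows, but never reach exactly zero.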

6. Lasso Regression:

Lasso (Least Absolute Shrinkage and Selection Operator) is similar to ridge regression, but it penalizes the absolute size of the regression coefficients. This reduces variability and can improve accuracy.

Lasso can shrink coefficients to exactly zero, which makes it useful for feature selection. It is also called L1 regularization. If a group of predictors is highly correlated, lasso picks only one of them and shrinks the rest to zero.
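One common way to fit lasso is cyclic coordinate descent, where each coefficient update is a "soft-thresholding" step that can land exactly on zero. The sketch below minimizes 0.5 * (sum of squared errors) + λ * (sum of absolute coefficients) on centered data; this scaling convention, the sweep count, the function names and the data are assumptions for illustration. With two identical predictors, the first column in the update order is kept and the second is set to exactly zero.

```python
def soft_threshold(z, t):
    """Shrink z toward zero by t; values inside [-t, t] become exactly zero."""
    if z > t:
        return z - t
    if z < -t:
        return z + t
    return 0.0


def lasso_coordinate_descent(X, y, lam, sweeps=100):
    """Minimize 0.5 * sum((y - X b)^2) + lam * sum(|b_j|) on centered data.

    X is a list of rows; each coefficient is updated in turn while the
    others are held fixed.
    """
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    for _ in range(sweeps):
        for j in range(p):
            # Correlation of feature j with the residual that leaves feature j out.
            rho = sum(
                X[i][j] * (y[i] - sum(X[i][k] * beta[k] for k in range(p) if k != j))
                for i in range(n)
            )
            norm_sq = sum(X[i][j] ** 2 for i in range(n))
            beta[j] = soft_threshold(rho, lam) / norm_sq
    return beta


x = [-2.0, -1.0, 0.0, 1.0, 2.0]
X = [[v, v] for v in x]  # two perfectly correlated predictors
y = [2 * v for v in x]
print(lasso_coordinate_descent(X, y, lam=5.0))   # [1.5, 0.0]
print(lasso_coordinate_descent(X, y, lam=25.0))  # [0.0, 0.0]
```

Which of the correlated predictors survives depends here only on the update order, not on anything special about that column, and a large enough λ sets every coefficient to zero.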

7. Elastic Net Regression:

Elastic net is a combination of the ridge and lasso techniques. It is very useful when several independent variables are correlated with each other: lasso tends to pick one of them at random, while elastic net tends to keep both.

Its drawback is that it can suffer from double shrinkage.
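A minimal sketch of elastic net adds both penalties to the least squares objective: 0.5 * (sum of squared errors) + l1 * (sum of absolute coefficients) + 0.5 * l2 * (sum of squared coefficients). Using separate `l1` and `l2` weights is one parametrization chosen here as an assumption (libraries often use a mixing ratio instead), and the function names and data are illustrative. In the coordinate descent update, the L1 part soft-thresholds the numerator as in lasso and the L2 part enlarges the denominator as in ridge.

```python
def soft_threshold(z, t):
    """Shrink z toward zero by t; values inside [-t, t] become exactly zero."""
    if z > t:
        return z - t
    if z < -t:
        return z + t
    return 0.0


def elastic_net_coordinate_descent(X, y, l1, l2, sweeps=200):
    """Minimize 0.5*sum((y - X b)^2) + l1*sum(|b_j|) + 0.5*l2*sum(b_j^2)
    on centered data with cyclic coordinate descent."""
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    for _ in range(sweeps):
        for j in range(p):
            rho = sum(
                X[i][j] * (y[i] - sum(X[i][k] * beta[k] for k in range(p) if k != j))
                for i in range(n)
            )
            norm_sq = sum(X[i][j] ** 2 for i in range(n))
            # L1 term: lasso-style soft-thresholding of the numerator.
            # L2 term: ridge-style shrinkage through the larger denominator.
            beta[j] = soft_threshold(rho, l1) / (norm_sq + l2)
    return beta


x = [-2.0, -1.0, 0.0, 1.0, 2.0]
X = [[v, v] for v in x]  # two perfectly correlated predictors
y = [2 * v for v in x]
beta = elastic_net_coordinate_descent(X, y, l1=5.0, l2=10.0)
print([round(b, 4) for b in beta])  # [0.5, 0.5]
# Setting l2 = 0 reduces the update to plain lasso on the same data.
print(elastic_net_coordinate_descent(X, y, l1=5.0, l2=0.0))  # [1.5, 0.0]
```

Elastic net keeps both correlated predictors with equal weights, whereas the pure lasso case keeps only one. The combined effect 0.5 + 0.5 = 1.0 is also smaller than the 1.5 that the L1 penalty alone leaves here, which illustrates the double shrinkage mentioned above.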

From all the above types, we can choose the best one based on the dependent variable, the independent variables and the dimensionality of the data.