What are the different metrics used in linear regression to evaluate the accuracy of a model?

Mean Absolute Error/L1 Loss

Mean Absolute Error (MAE) is the average of the absolute differences between the predictions and the actual observations. It measures the magnitude of the errors without considering their direction. The absolute value is not differentiable where the error is zero, so minimizing MAE is harder than minimizing a squared loss and may call for more complicated tools, such as linear programming. MAE is more robust to outliers than squared-error metrics because it does not square the errors.
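The sketch below computes MAE and checks the robustness claim by adding one large outlier to otherwise small errors. It puts MAE next to a squared-error average for comparison. The function names and the data are made up for illustration.

```python
def mean_absolute_error(y_true, y_pred):
    """Average of the absolute differences |y - y_hat|."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(y - p) for y, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true, y_pred):
    """Average of the squared differences (y - y_hat) ** 2, for comparison."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


y_true = [3.0, 5.0, 2.5, 7.0]
clean_pred = [2.5, 5.0, 3.0, 8.0]
outlier_pred = [2.5, 5.0, 3.0, 17.0]  # last prediction is off by 10

print(mean_absolute_error(y_true, clean_pred))    # 0.5
print(mean_absolute_error(y_true, outlier_pred))  # 2.75
print(mean_squared_error(y_true, clean_pred))     # 0.375
print(mean_squared_error(y_true, outlier_pred))   # 25.125
```

A single outlier multiplies MAE by 5.5. The squared-error average grows by a factor of 67 because the outlier's error of 10 is squared to 100.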

The gradient of MAE with respect to the predictions is a step function. It is -1 when Y_hat is smaller than the target and +1 when it is larger, with each value scaled by 1/n when the errors are averaged over n observations.

The gradient is not defined when the prediction is perfect. When Y_hat equals Y, the absolute value has a kink, so its derivative does not exist at that point.
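Here is a minimal sketch of the per-prediction MAE gradient. Each entry is the 1/n-scaled step value. The hypothetical helper `mae_gradient` returns `None` where the derivative does not exist. A common practical workaround is to use 0 at that point instead, since 0 is a valid subgradient of the absolute value there. That substitution is not part of the text above.

```python
def mae_gradient(y_true, y_pred):
    """Partial derivative of MAE with respect to each prediction y_hat_i.

    d/d(y_hat_i) of (1/n) * sum(|y_hat_j - y_j|) is +1/n if y_hat_i > y_i,
    -1/n if y_hat_i < y_i, and undefined (None here) if y_hat_i == y_i.
    """
    n = len(y_true)
    grads = []
    for y, p in zip(y_true, y_pred):
        if p > y:
            grads.append(1.0 / n)   # prediction above the target
        elif p < y:
            grads.append(-1.0 / n)  # prediction below the target
        else:
            grads.append(None)      # kink at a perfect prediction
    return grads


grads = mae_gradient([3.0, 5.0, 2.5, 7.0], [2.5, 5.0, 3.0, 8.0])
print(grads)  # [-0.25, None, 0.25, 0.25]
```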

Mean Bias Error

Mean Bias Error is the same as MAE, except that we do not take absolute values. It should be used with caution because positive and negative errors can cancel each other out. Its sign, however, indicates whether the model has a positive or a negative bias.
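The following sketch shows both the cancellation problem and the bias signal. The sign convention (error = prediction minus actual) is an assumption: some sources use actual minus prediction, which flips the sign. The data are invented.

```python
def mean_bias_error(y_true, y_pred):
    # Assumed sign convention: error = prediction - actual, so a positive
    # result means the model over-predicts on average.
    return sum(p - y for y, p in zip(y_true, y_pred)) / len(y_true)


y_true = [10.0, 10.0, 10.0, 10.0]
balanced = [12.0, 8.0, 13.0, 7.0]  # errors +2, -2, +3, -3 cancel out
biased = [11.0, 12.0, 11.0, 12.0]  # every prediction is too high

mbe_balanced = mean_bias_error(y_true, balanced)
mbe_biased = mean_bias_error(y_true, biased)
mae_balanced = sum(abs(p - y) for y, p in zip(y_true, balanced)) / len(y_true)

print(mbe_balanced)  # 0.0 even though no prediction is exact
print(mae_balanced)  # 2.5
print(mbe_biased)    # 1.5, i.e. a positive bias
```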

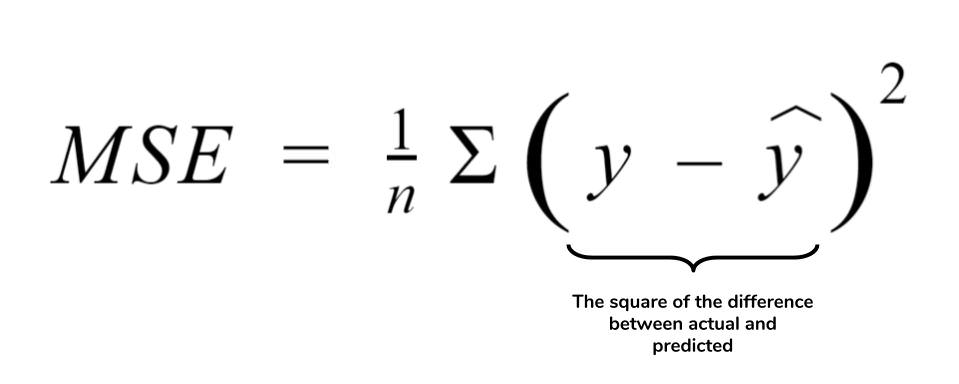

Mean Squared Error (MSE)

MSE is the average of the squared differences between the original and predicted values over the data set. It measures how close a fitted line is to the data points: the smaller the MSE, the closer the fit is to the data. Because the errors are squared, MSE is expressed in the square of the units of whatever is plotted on the vertical axis (the target variable).
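As a small illustration, this sketch compares two fits of the same targets. The fit with the smaller MSE is the one closer to the data. The targets are hypothetical prices in thousands of dollars, so the MSE is in squared thousands of dollars.

```python
def mean_squared_error(y_true, y_pred):
    """Average of the squared residuals (y - y_hat) ** 2."""
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical targets, e.g. prices in thousands of dollars.
y_true = [200.0, 250.0, 300.0]
fit_a = [210.0, 240.0, 320.0]  # residuals -10, 10, -20
fit_b = [205.0, 245.0, 310.0]  # residuals -5, 5, -10

mse_a = mean_squared_error(y_true, fit_a)
mse_b = mean_squared_error(y_true, fit_b)
print(mse_a)  # 200.0 (squared thousands of dollars)
print(mse_b)  # 50.0, the closer fit
```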

Root Mean Squared Error (RMSE)

RMSE is simply the square root of MSE. The square root is introduced so that the errors are on the same scale, and in the same units, as the targets.
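The sketch below computes RMSE with the standard library's `math.sqrt`. It uses invented data in which every residual is plus or minus 10, so the result is back in the targets' units (hypothetical thousands of dollars).

```python
import math


def root_mean_squared_error(y_true, y_pred):
    """Square root of the mean of the squared residuals."""
    mse = sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)
    return math.sqrt(mse)


# Hypothetical targets in thousands of dollars.
y_true = [200.0, 250.0, 300.0, 350.0]
y_pred = [210.0, 240.0, 310.0, 340.0]  # every residual is +/-10

rmse = root_mean_squared_error(y_true, y_pred)
print(rmse)  # 10.0, in thousands of dollars (MSE would be 100.0)
```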

MSE and RMSE have the same minimizers. Every minimizer of MSE is also a minimizer of RMSE and vice versa, because the square root is strictly increasing on non-negative values and therefore preserves the ordering of the losses.
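This can be checked numerically with a grid search over an intercept-only model y_hat = c. Such a model is a degenerate linear regression, used here purely for illustration on made-up data. Both losses pick the same c, which for a squared loss is the mean of the observations.

```python
import math

# Hypothetical observations for an intercept-only model y_hat = c.
y = [1.0, 2.0, 3.0, 10.0]


def mse(c):
    return sum((yi - c) ** 2 for yi in y) / len(y)


def rmse(c):
    return math.sqrt(mse(c))


# Candidate values of c from 0.0 to 10.0 in steps of 0.5.
grid = [i * 0.5 for i in range(21)]

best_mse = min(grid, key=mse)
best_rmse = min(grid, key=rmse)
print(best_mse, best_rmse)  # 4.0 4.0 (the mean of y)
```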