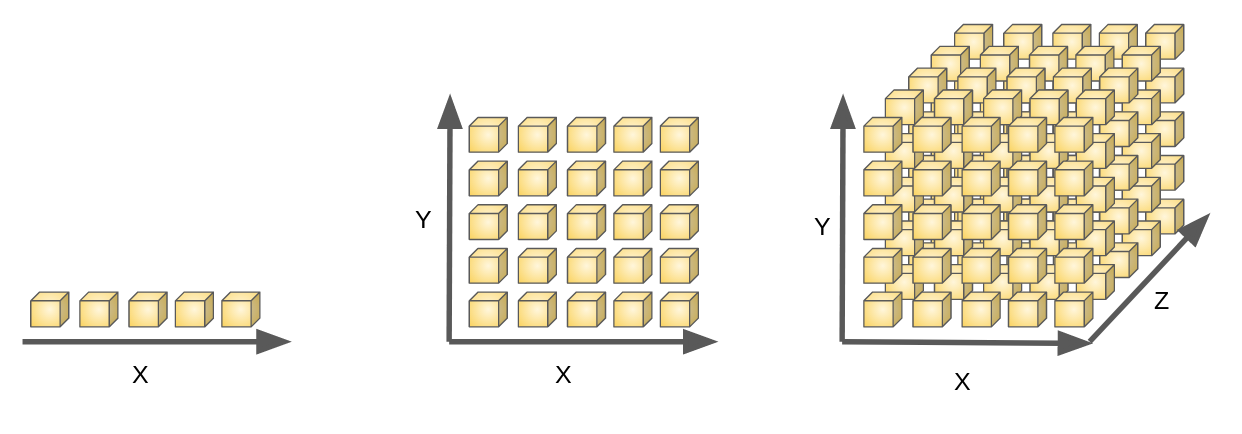

What do you mean by Curse of Dimensionality? What are the different ways to deal with it?

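The Curse of Dimensionality refers to the problems that arise when the data has too many features: the data becomes sparse in the feature space, and the analysis becomes harder to understand and more expensive to execute. It can be mitigated by Dimensionality Reduction, which reduces the number of features while keeping as much useful information as possible.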

As the number of predictors (dimensions, or features) in the dataset increases, models become computationally more expensive to fit, and the amount of data needed to produce accurate predictions in classification or regression models can grow exponentially.
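One commonly cited symptom of this is that distances between points become less informative as dimensions are added. The sketch below is only an illustration under assumed conditions (uniform random points, Euclidean distance); the function name `relative_contrast` and the sample sizes are hypothetical choices, not part of the original notes.

```python
import numpy as np

def relative_contrast(n_points, n_dims, seed=0):
    """(farthest - nearest) / nearest distance from a random query point.

    Assumption for illustration: points are drawn uniformly from the unit cube.
    """
    rng = np.random.default_rng(seed)
    points = rng.random((n_points, n_dims))
    query = rng.random(n_dims)
    dists = np.linalg.norm(points - query, axis=1)
    return (dists.max() - dists.min()) / dists.min()

# Print the contrast for a few dimensionalities; it tends to shrink as d grows,
# meaning the nearest and farthest neighbours look increasingly alike.
for d in (2, 10, 100, 1000):
    print(d, round(relative_contrast(500, d), 3))
```

When the contrast is small, methods that rely on "nearest" neighbours have little to distinguish near points from far ones, which is one reason prediction gets harder.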

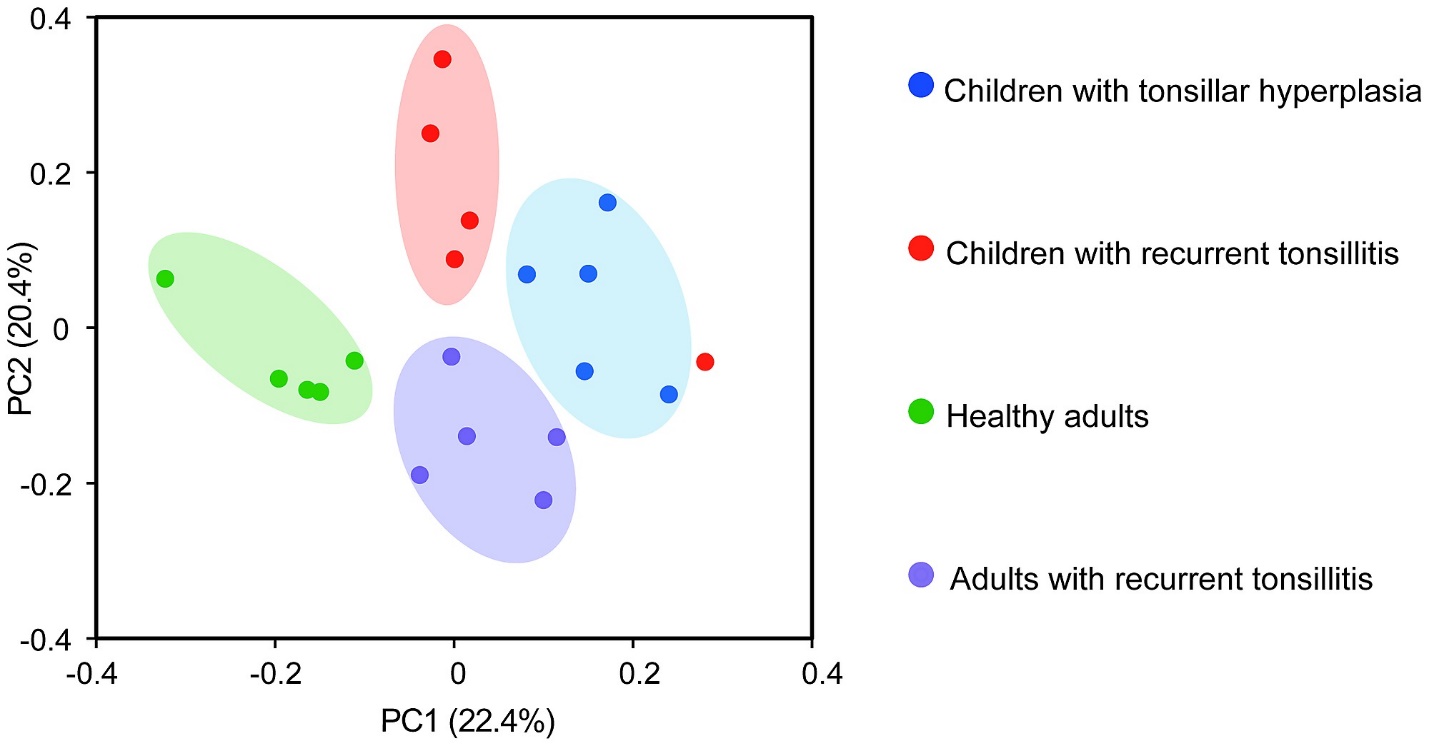

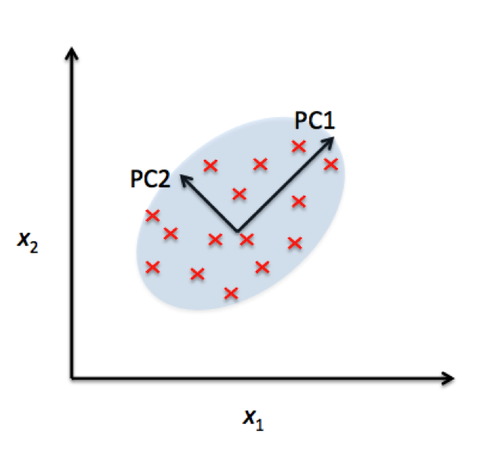

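Principal Component Analysis (PCA) reduces the dimensions of a d-dimensional dataset by projecting it onto a k-dimensional subspace, where k < d, chosen so that the projected data retains as much variance as possible.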
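The projection can be sketched with a singular value decomposition of the centered data. This is a minimal illustration, not a full PCA implementation; the helper name `pca_project` and the random data are assumptions made for the example.

```python
import numpy as np

def pca_project(X, k):
    """Project the rows of X (n samples, d features) onto the top-k principal directions."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are orthonormal directions, ordered by decreasing singular value.
    _, _, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:k]                      # shape (k, d)
    return X_centered @ components.T, components

# Hypothetical data: 200 samples with d = 5 features, reduced to k = 2.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
Z, components = pca_project(X, k=2)
print(Z.shape)
```

Here `Z` holds the k-dimensional representation and `components` holds the directions of the subspace; no class labels are involved at any point.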

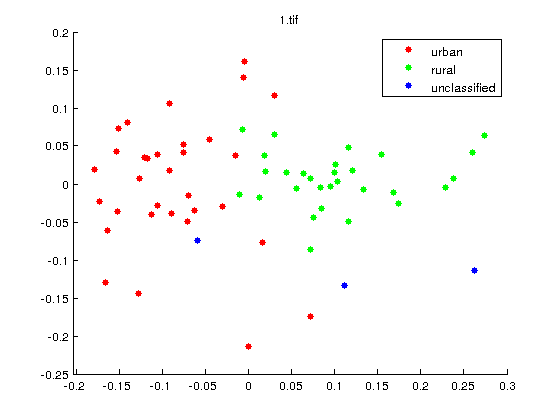

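Linear Discriminant Analysis (LDA) extracts k new independent variables, each a linear combination of the original features, that maximize the separation between the classes of the dependent variable. Unlike PCA, it is supervised: it uses the class labels.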
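A rough sketch of this idea uses the within-class and between-class scatter matrices and keeps the leading eigenvectors of their ratio. The helper name `lda_project` and the synthetic three-class data are assumptions for illustration only; with C classes, at most C - 1 discriminant directions are meaningful, so k should not exceed that.

```python
import numpy as np

def lda_project(X, y, k):
    """Project X onto k directions that separate the classes in y (Fisher's criterion)."""
    d = X.shape[1]
    mean_all = X.mean(axis=0)
    S_w = np.zeros((d, d))   # within-class scatter
    S_b = np.zeros((d, d))   # between-class scatter
    for c in np.unique(y):
        Xc = X[y == c]
        mean_c = Xc.mean(axis=0)
        S_w += (Xc - mean_c).T @ (Xc - mean_c)
        diff = (mean_c - mean_all).reshape(-1, 1)
        S_b += len(Xc) * (diff @ diff.T)
    # Leading eigenvectors of pinv(S_w) @ S_b maximize between/within scatter.
    eigvals, eigvecs = np.linalg.eig(np.linalg.pinv(S_w) @ S_b)
    order = np.argsort(eigvals.real)[::-1]
    W = eigvecs[:, order[:k]].real           # shape (d, k)
    return X @ W, W

# Hypothetical data: three classes in 4 dimensions, 50 samples each.
rng = np.random.default_rng(0)
means = np.array([[0, 0, 0, 0], [4, 0, 0, 0], [0, 4, 0, 0]], dtype=float)
X = np.vstack([rng.normal(size=(50, 4)) + m for m in means])
y = np.repeat([0, 1, 2], 50)
Z, W = lda_project(X, y, k=2)
print(Z.shape)
```

The pseudo-inverse is used here only to keep the sketch robust when the within-class scatter is close to singular; a production implementation would typically rely on a library routine instead.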

PCA and LDA are two methods commonly used to reduce the dimensions (features) of the data, and through them we can mitigate the Curse of Dimensionality.